【AAAI 2026 Invited Talk】 Quest of AI towards Specializable Generalist: From Reasoning to Scientific Discovery

2026-01-30

On January 22, Professor Bowen Zhou, Director and Chief Scientist of Shanghai Artificial Intelligence Laboratory, delivered an invited talk entitled "Quest of AI towards Specializable Generalist: From Reasoning to Scientific Discovery" at the 40th AAAI Conference on Artificial Intelligence (AAAI 2026).

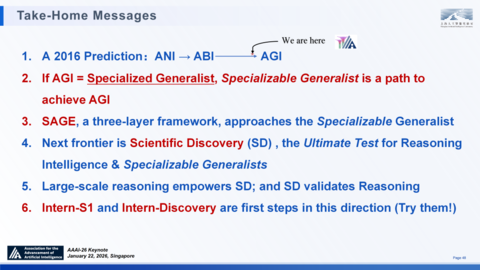

Zhou pointed out that we are now on the eve of Artificial General Intelligence (AGI), yet a critical link is still missing: Specialized Generalist Intelligence. There is an urgent need to drive the evolution of Scientific Intelligence from 1.0 to 2.0, that is, from AI for Science (AI4S) to AGI for Science (AGI4S).

He further proposed the following key insights:

• If AGI = Specialized Generalist, then a Specializable Generalist represents a feasible path to AGI.

• Core challenges and approaches for building specializable generalist models: such models require low-cost, scalable, dense feedback during training; the ability to learn continuously and explore actively; and the capacity to generate multi-perspective, multi-solution responses to a single problem.

• Developing a specializable general model demands breakthroughs across three core dimensions: Signal, Scale, and Ground.

• Scientific discovery is the next frontier for AI: it serves both as the ultimate test of reasoning and as the proving ground for "Specialized Generalist AGI".

• Large-scale deep reasoning will empower scientific discovery, and in turn, scientific discovery will feed back into the evolution of reasoning capabilities.

Based on these insights, Shanghai Artificial Intelligence Laboratory (Shanghai AI Lab) has carried out a series of explorations and validations. In his talk, Professor Zhou elaborated on the Synergistic Architecture for Generalizable Experts (SAGE), a unified architectural response to building a "Specialized Generalist" on the path to AGI.

He also introduced the two core infrastructure pillars underpinning the exploration of AGI4S, Intern-S1 and Intern-Discovery, along with the progress achieved at each stage so far.

"If we compare SAGE to a map of a new world, we have already established solid initial validations and many vanguard outposts." Zhou issued a call to action to audiences in the venue and beyond: "The architecture is ready, but there is still a lot of blank space in the picture. We look forward to expanding the blueprint together with more peers!"

The full text of the talk is provided below, with minor edits for readability that do not alter the original meaning.

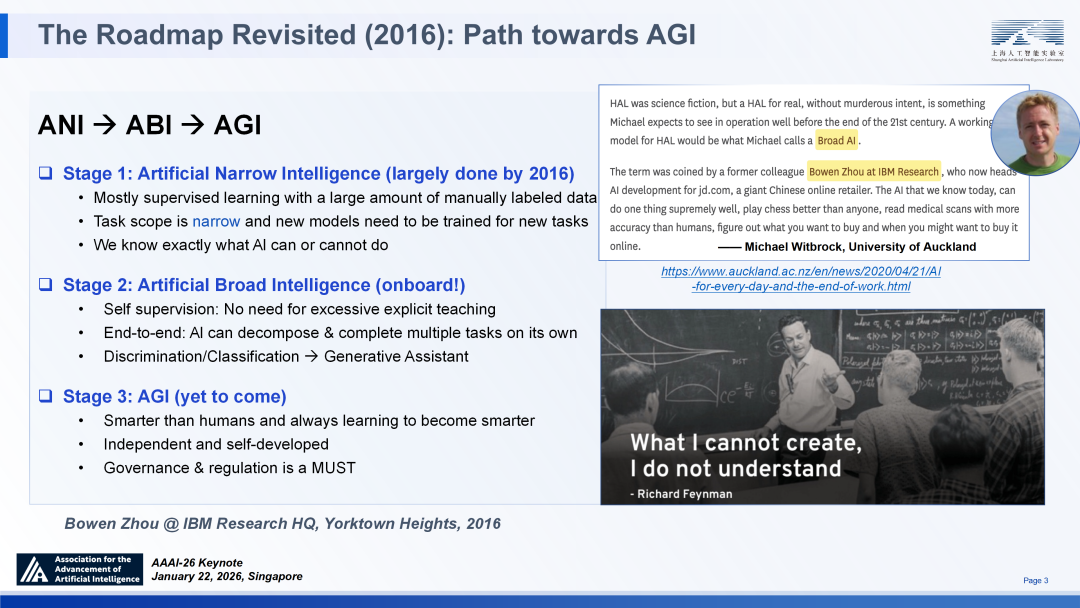

Evolutionary Projection: The Historical Leap from ANI to AGI

The development of artificial intelligence is not a linear accumulation of advancements, but a process marked by distinct phased leaps. Reflecting on the historical timeline of AI evolution helps us clarify our current position and future direction in this field.

I have been pondering the essence of intelligence since I first engaged in AI research back in 1996. Notably, during my tenure as the Dean of the IBM AI Foundations Research Institute, I proposed for the first time a strategic roadmap toward Artificial General Intelligence (AGI), which clearly defined three pivotal phases of AI development: Artificial Narrow Intelligence (ANI), Artificial Broad Intelligence (ABI), and AGI, with explicit definitions for each.

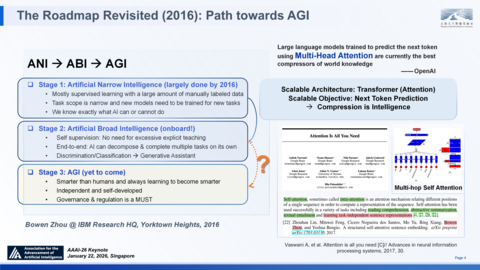

My judgment at that time was that ANI would mature by 2016, and that the path to AGI would not be a direct leap. Instead, it would require the prior realization of ABI, which possesses cross-domain generalization capabilities. We believed this transition would demand a fundamental shift in technical paradigms, encompassing at least three key aspects: a move from supervised learning to self-supervised learning, a shift from cascaded systems of human-segmented tasks to end-to-end architectures, and an evolution from discriminative tools to generative assistants.

More than six years later, the launch of ChatGPT verified for the first time that an AI system could achieve all three of the above milestones simultaneously, essentially heralding the arrival of the ABI era. This historic breakthrough validated the effectiveness of the Scaling Law — by scaling the Transformer architecture and optimizing for a simple "Next Token Prediction" objective, we learned to compress world knowledge into Large Language Models.

On a personal note, I am humbled that the "multi-hop self-attention" mechanism my colleagues and I proposed in 2016 was cited and acknowledged by the seminal Transformer paper① as one of the first works on "task-independent" sentence representations, contributing to compression-based intelligence in the pre-training era.

Strategic Path: The Ultimate Test of Specialized Generalist and Scientific Discovery

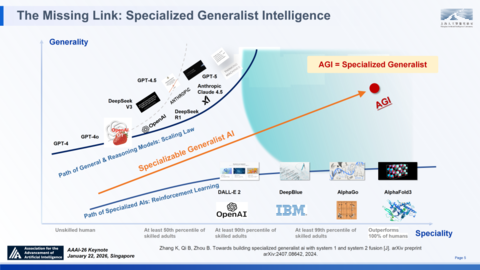

With the Scaling Law having endowed large language models with broad generalization capabilities (ABI), we posed a pivotal strategic question in early 2023: is the next step toward AGI merely a matter of computational scaling? Reflecting on such questions led me to propose the "Specialized Generalist" path in 2023. The core idea was to fuse System 1 and System 2 dynamically across a wide variety of real-world tasks.

Redefining the Path to AGI

Over the past 70 years, AI has largely advanced separately along two dimensions: "specialization" and "generality". Pre-LLM systems such as Deep Blue, AlphaGo and AlphaFold were "Narrow AI", yet they achieved expert or superhuman performance in specific domains.

In contrast, LLMs possess broad, superhuman general capabilities but often struggle to match the depth of human experts on specialized tasks. AGI must transcend this duality and build an intelligent architecture that dynamically fuses System 1 (fast, intuitive thinking) and System 2 (slow, logical thinking). That is, while maintaining a universal cognitive foundation, it should be able to achieve expert-level mastery of any specific task through continuous learning and deep reasoning. (We formalized these thoughts in a position paper released on arXiv in 2024②.)

AGI = Specialized Generalist

The emergence of OpenAI o1 at the end of 2024 and DeepSeek-R1 in early 2025, which markedly enhanced logical reasoning by applying large-scale Reinforcement Learning on top of LLMs, has strongly validated our prediction of the "Specialized Generalist" path. In October 2025, Prof. Yoshua Bengio et al. proposed a definition of AGI that decomposes it into ten core broad abilities alongside numerous narrow specialized ones; mastering all of them implies AGI. This framework aligns remarkably well with our "AGI = Specialized Generalist" definition, suggesting that the path we identified in 2023 is increasingly becoming a shared consensus across the community.

Scientific Discovery: The Ultimate Frontier of Reasoning Intelligence

What is the next frontier? I believe it is Scientific Discovery (SD). Beyond all the benefits AI4S has promised, such as curing cancer, SD is in my opinion the ultimate test of reasoning intelligence, and hence the absolute frontier on the path from AI to AGI. SD is a complex interplay of the known and the unknown, encompassing the entire process from hypothesis generation and experimental validation to theoretical synthesis. It poses a triple challenge to artificial intelligence:

Known Unknowns: A typical example is combinatorial explosion. In molecular design or materials science, for instance, we face search spaces of 10^60 configurations, far beyond what traditional methods can handle.

Unknown Unknowns: SD provides the perfect testbed for validating an AI's ability to generalize to out-of-distribution scenarios.

Sparse and Delayed Rewards: The long, cyclical nature of SD introduces severe challenges that push the limits of current reasoning RL③.

Therefore, SD is not merely a great application of AI, but a powerful force driving the "Specialized Generalist" towards AGI.

Next, I would like to share SAGE, our unified architectural response to this challenge.

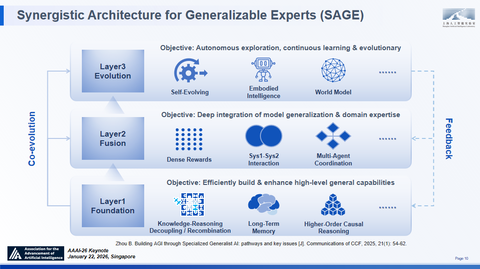

A Proposal: The Synergistic Architecture for Generalizable Experts

How do we tackle the combinatorial explosion and "Unknown Unknowns" in scientific discovery? And how do we build this "Specialized Generalist"?

We propose the Synergistic Architecture for Generalizable Experts (SAGE)④. It is not just a stack of models; it is a unified cognitive ecosystem designed to bridge the chasm between broad "Generalization" and deep "Specialization". It consists of three logically coupled layers that operate in a continuous loop of cognitive evolution.

Foundation Layer: This layer aims to structurally decouple Knowledge from Reasoning. By introducing intrinsic mechanisms for efficient long-term memory, we free the model from rote memorization, providing the upper layers with a cleaner, more flexible canvas for compositional generalization and higher-order causal reasoning.

Fusion Layer: This layer uses dense process rewards and multi-agent collaboration to dynamically orchestrate the rhythm between intuitive "fast thinking" (for breadth) and logical "slow thinking" (for depth). It decides when to generalize and when to specialize.

Evolution Layer: This layer represents a paradigm shift from "Passive Data Fitting" to "Active Epistemic Exploration". It empowers the model to actively interact with the environment, hypothesize in Out-of-Distribution scenarios, and autonomously correct its own world model based on real-world feedback.

Crucially, instead of being static, SAGE operates as a Recursive Ecosystem. We have Bottom-up Support, where decoupled representations fuel reasoning strategies. Simultaneously, we have Top-down Injection, where high-value feedback from active discovery flows back down, converting "unknowns" into new training signals. Through this bidirectional loop, SAGE achieves Full-Stack Self-Improvement, evolving not just its parameters, but its cognitive strategies.

The Synergistic Architecture for Generalizable Experts (SAGE)

Foundation Layer

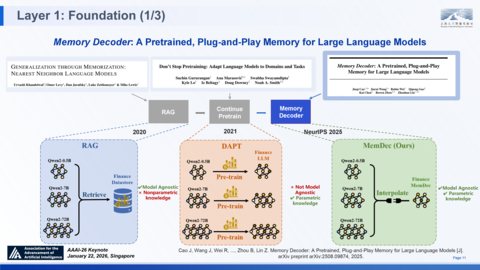

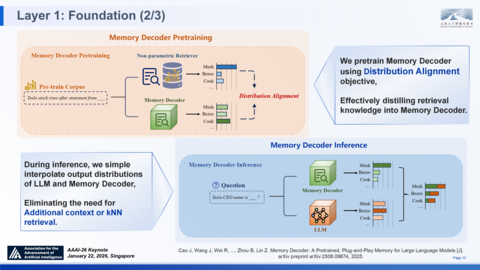

The foundation layer of SAGE is designed to address the fact that current LLMs often entangle facts with reasoning. One example is the Memory Decoder⑤, which tackles the following problems with current architectures:

• Widely used retrieval augmentation (RAG) can incorporate external knowledge into an LLM, but retrieval over long contexts during inference introduces latency and substantial engineering overhead.

• Domain-adaptive pretraining, meanwhile, requires full-parameter training, which is costly and prone to catastrophic forgetting.

As a pretrained, plug-and-play memory component, the Memory Decoder runs in parallel with the base model, and the output distributions of the two are fused. It is the first method to replace traditional non-parametric retrievers with a compact parametric model while retaining the interpolation-enhanced paradigm. Experiments show that its inference overhead is only 1.28 times that of the base model, significantly lower than that of existing mainstream solutions. Within the SAGE framework, innovations such as the Memory Decoder primarily support the foundation layer, enabling "decoupled yet integrable inference and knowledge" while strengthening long-term memory.

Memory Decoder: A Pretrained, Plug-and-Play Memory for Large Language Models

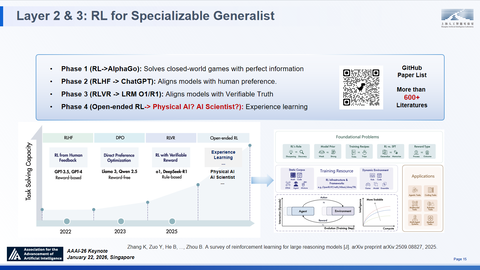
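The fused output distribution can be illustrated with a kNN-LM-style linear interpolation between the base model's next-token distribution and that of the memory component. This is a minimal sketch that assumes plain linear interpolation with a fixed, hypothetical weight `lam`; the Memory Decoder's actual architecture and weighting are described in the cited work.

```python
from typing import List, Sequence


def fuse_next_token_distributions(
    p_base: Sequence[float],
    p_mem: Sequence[float],
    lam: float = 0.3,
) -> List[float]:
    """Linearly interpolate two next-token distributions over the same vocabulary.

    lam is a hypothetical mixing weight: 0.0 keeps only the base model,
    1.0 keeps only the memory component.
    """
    if len(p_base) != len(p_mem):
        raise ValueError("distributions must share one vocabulary")
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    fused = [(1.0 - lam) * b + lam * m for b, m in zip(p_base, p_mem)]
    total = sum(fused)  # renormalize to guard against floating-point drift
    return [x / total for x in fused]


# Toy 3-token vocabulary: the memory component is confident about token 1.
base = [0.7, 0.2, 0.1]
memory = [0.1, 0.8, 0.1]
print(fuse_next_token_distributions(base, memory, lam=0.5))
```

Because both components run in parallel and only their final distributions are combined, the base model's weights stay untouched, which is what makes such a memory "plug-and-play".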

Reinforcement Learning

Built upon the foundation model, Reinforcement Learning (RL) is the critical methodology for realizing the "Specializable Generalist": it serves as the bridge in SAGE that enables the Fusion Layer's reasoning and the Evolution Layer's continuous adaptation. Looking back at its evolutionary journey, RL has progressed from early closed-world games (e.g., AlphaGo) to human alignment (RLHF). It is now in the stage of Verifiable Reasoning (RLVR), represented by o1 and DeepSeek-R1, and will ultimately move toward a new era of open-ended RL for the physical world and scientific discovery.

RL for Specializable Generalist and The Three Pillars of Reasoning RL

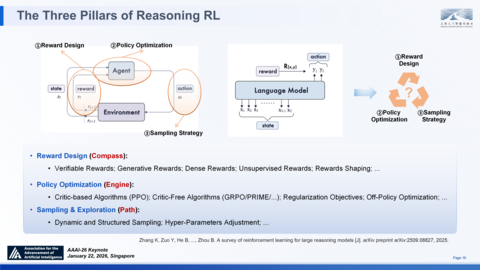

At the micro-mechanistic level, RL is categorized into three core pillars. Reward Design acts as a compass guiding what we specialize our models for. Policy Optimization acts as an engine defining how the model moves forward. Sampling & Exploration decides the path along which our model evolves to specialize⑥.

Given the diverse nature of real tasks, different tasks naturally suit different RL methods, so the real challenge lies in Unification: how do we integrate the best Reward, Policy, and Sampling strategies into one cohesive system, the specializable generalist?

Fusion Layer

With RL established as a key bridge to the Fusion Layer, I will next present several of our recent explorations and advances within this layer. To build a "Specializable Generalist", we must overcome three core challenges that traditional RL faces in complex reasoning tasks: exorbitant supervision costs, entropy collapse during training, and mode collapse onto a single reasoning path. To this end, we have introduced three paradigm-defining algorithmic innovations at this layer, designed respectively to construct a dense reward mechanism, sustain continuous exploration, and stimulate diverse reasoning paths.

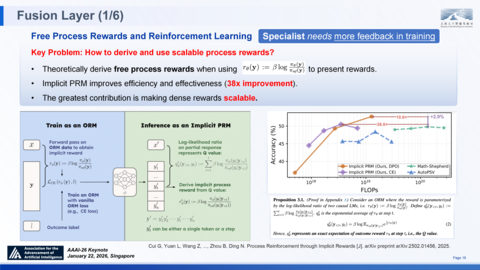

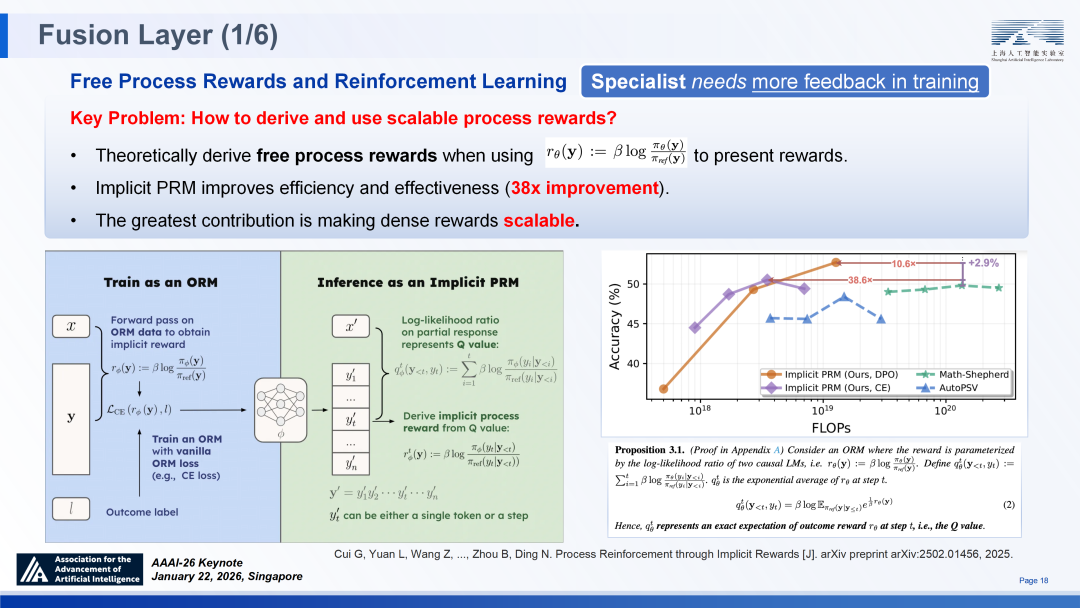

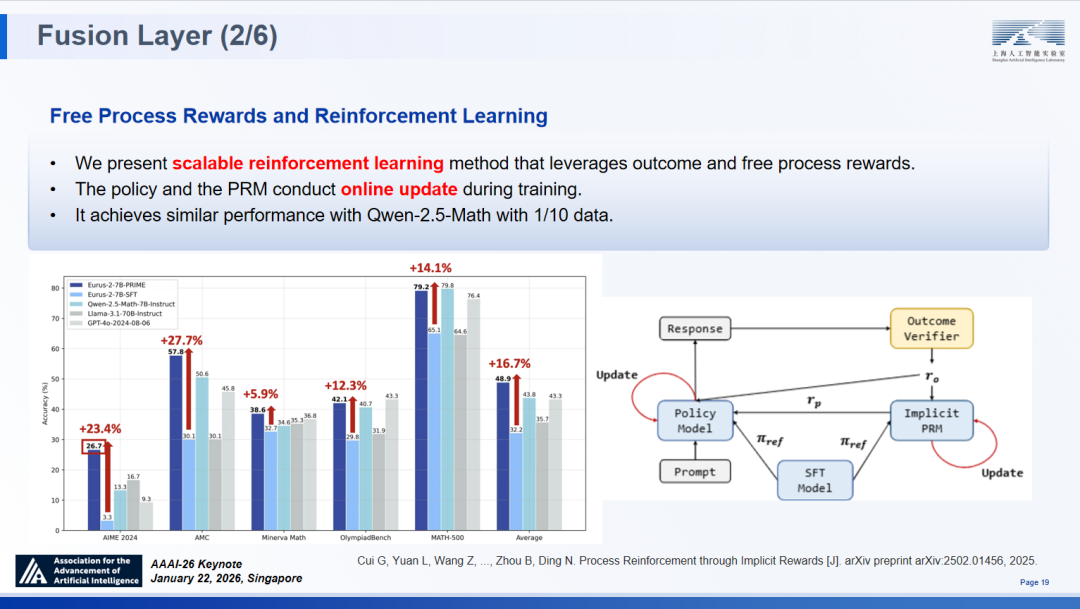

PRIME

To resolve the tension between the demand for dense supervision and exorbitant annotation costs, we propose PRIME⑦, which obtains "free" process rewards to improve RL with dense rewards. Its core insight is to leverage the statistical divergence between the policy model and the reference model. By formulating the training objective so that the reward is the log-likelihood ratio of the two models, we prove mathematically that the model implicitly learns a Q-function. This means that, without explicitly training a large-scale PRM, the agent can derive dense reward signals at every reasoning step by evaluating the quality of actions in the current state.
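The log-likelihood-ratio reward can be sketched in a few lines. The sketch assumes that per-token log-probabilities from the trained model and from the frozen reference model are already available, and it uses a hypothetical `step_lengths` list to mark how many tokens each reasoning step spans; `beta` is an illustrative scale, not a value taken from the paper.

```python
from typing import List, Sequence


def implicit_token_rewards(
    policy_logprobs: Sequence[float],
    ref_logprobs: Sequence[float],
    beta: float = 0.05,
) -> List[float]:
    """Dense per-token reward: beta * (log pi_theta(y_t | y_<t) - log pi_ref(y_t | y_<t))."""
    if len(policy_logprobs) != len(ref_logprobs):
        raise ValueError("log-prob sequences must be aligned token by token")
    return [beta * (lp - lr) for lp, lr in zip(policy_logprobs, ref_logprobs)]


def step_rewards(token_rewards: Sequence[float], step_lengths: Sequence[int]) -> List[float]:
    """Sum token rewards within each reasoning step (step_lengths = tokens per step)."""
    if sum(step_lengths) != len(token_rewards):
        raise ValueError("step lengths must cover every token exactly once")
    out, start = [], 0
    for n in step_lengths:
        out.append(sum(token_rewards[start:start + n]))
        start += n
    return out


# Two reasoning steps of two tokens each; positive values mark tokens the
# trained model prefers more strongly than the reference model does.
token_r = implicit_token_rewards([-0.2, -1.0, -0.3, -0.1], [-0.5, -1.0, -0.9, -0.4], beta=1.0)
print(step_rewards(token_r, [2, 2]))
```

No separate reward network is queried here: the dense signal falls out of two forward passes that RL training already performs, which is where the computational savings come from.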

Free Process Rewards and RL (PRIME)

This innovation delivers remarkable, multi-dimensional advantages:

• Computational efficiency: It allows us to derive dense, step-by-step signals during inference without training a separate, heavy PRM. Implicit PRM achieves significantly higher accuracy with greater compute efficiency compared to previous methods like Math-Shepherd.

• System Architecture: We implemented a scalable RL method where the Policy Model & the Implicit PRM conduct online updates together, leveraging both outcome verifiers and the "free" process rewards derived in the previous step.

• Data Efficiency: This achieves performance parity with SOTA models but requires only 1/10th of the training data.

Benchmarking results strongly validate the effectiveness of PRIME: we see a +23.4% gain on AIME 2024, +27.7% on AMC, and significant lifts on MATH-500, validating that the dense yet implicit rewards drive superior reasoning capabilities.

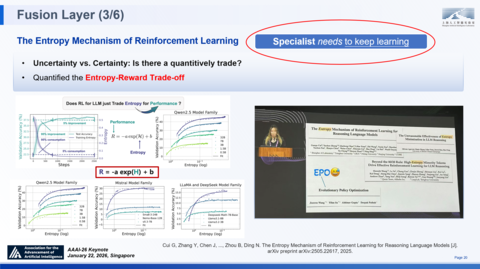

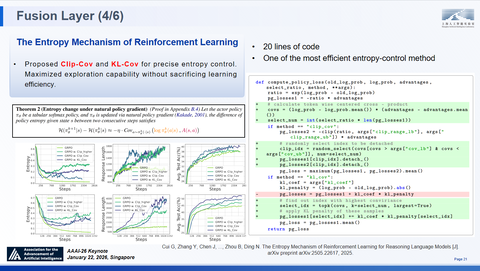

Training an expert model requires not only feedback but also continuous learning. As we delved deeper into RL for reasoning, we encountered a fundamental challenge: "entropy collapse". Put simply, the question is how to keep a general model exploring and curious throughout the process of expert specialization, much like top human experts, who avoid becoming overconfident too early when tackling professional challenges and instead "stay hungry, stay foolish".

During training, as a model's performance initially improves, its policy entropy tends to drop sharply. This decline means the model's confidence in its outputs rises rapidly, leading it to converge prematurely on local optima and lose the ability to explore better reasoning paths. Experimental data show that entropy depletion is concentrated in the first few hundred training steps; after that point, performance gains quickly enter a phase of diminishing returns. This closely mirrors overconfidence in human cognition: a complacency that halts active exploration of subtle differences between problems. Yet such active exploration is precisely what turns a general model into a specialized expert capable of capturing deep underlying patterns.

Performance gains often come with a rapid drop in policy entropy, so the model becomes more certain but also less exploratory. Empirically, entropy is "consumed" very early in training: a large fraction of both the entropy loss and the performance improvement happens within the first few hundred steps, after which returns diminish. It is as if our AI generalist became too confident and stopped actively searching for better solutions, a search that is critical if the model is to become a specialist that discovers nuances.

To further specialize the generalist, we highlight a simple but important observation about this Entropy-Reward Trade-off: validation performance (R) follows a log-linear relationship with entropy (H)⑧. This simple finding can inform many better designs. For example, if we want scalable reasoning RL for our specializable generalist, the core problem is not simply to "train longer" but to "manage entropy consumption", so that the model retains enough uncertainty to explore better reasoning paths.
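Managing entropy consumption starts with measuring it. The sketch below computes the average next-token policy entropy of a rollout from raw logits, the quantity that collapses early in training. The toy logits are invented for illustration, and how a training loop should react to a falling value is left to the cited work.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    z = sum(exps)
    return [e / z for e in exps]


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def mean_policy_entropy(per_token_logits: Sequence[Sequence[float]]) -> float:
    """Average next-token entropy over the generated positions of a rollout."""
    return sum(entropy(softmax(l)) for l in per_token_logits) / len(per_token_logits)


# A sharper policy (one logit far above the rest) has lower entropy,
# i.e. it samples alternative continuations far less often.
early = [[1.0, 0.8, 0.5, 0.2]] * 3
late = [[6.0, 0.8, 0.5, 0.2]] * 3
print(mean_policy_entropy(early), mean_policy_entropy(late))
```

Logging this value per training step is enough to see the early "consumption" pattern described above.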

As an aside, Yejin Choi highlighted this work at NeurIPS 2025 when discussing the art of scaling, a great topic.

The Entropy Mechanism of RL

FlowRL

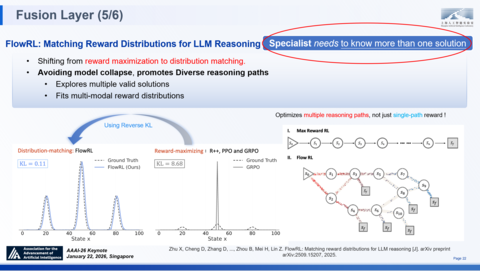

True experts can not only solve problems but also offer multiple solutions to the same problem, and the same holds for expert models. However, standard reinforcement learning methods (e.g., PPO, GRPO) adopt reward maximization as their sole objective. This focus is highly prone to mode collapse in complex reasoning tasks: models repeatedly converge to a single known successful path while neglecting other optimal or diverse solutions.

The KL divergence between the distribution generated by traditional RL methods and the target distribution reaches as high as 8.68, with a single extreme peak, which indicates an extremely narrow exploration space. To endow models with genuine expert-level cognitive diversity, we introduce FlowRL⑨ at the fusion layer: a method drawing on the ideas of Generative Flow Networks (GFlowNets) that marks a paradigm shift in the optimization logic of reinforcement learning.

FlowRL changes the learning objective from reward maximization to distribution matching. Instead of merely pursuing a single high-scoring solution, the model strives to learn the probability distribution over all valid reasoning paths.

• Distribution Fitting: The distribution generated by FlowRL can capture the vast majority of probability mass in the target distribution and fit multiple modes. As shown by the smooth curve on the left, its KL divergence is drastically reduced to 0.11, significantly outperforming traditional methods.

• Diverse Generation: The learned policy naturally fosters the generation of more diverse paths during reasoning, thereby achieving stronger robustness when confronted with unknown unknowns.
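The difference between the two objectives can be made concrete with a toy example. Assuming, for illustration only, a reward-induced target distribution p*(y) ∝ exp(β·r(y)) over a handful of enumerable reasoning paths, the sketch measures how far a mode-collapsed policy sits from that target. β, the rewards, and the policies are invented numbers, and this is not FlowRL's training objective: the real method must normalize rewards over an intractable space of paths.

```python
import math
from typing import List, Sequence


def reward_to_target(rewards: Sequence[float], beta: float = 5.0) -> List[float]:
    """Target distribution p*(y) proportional to exp(beta * r(y)) over candidate paths."""
    m = max(rewards)  # shift by the max reward for numerical stability
    w = [math.exp(beta * (r - m)) for r in rewards]
    z = sum(w)
    return [x / z for x in w]


def kl(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) in nats; large when q puts little mass where p has mass."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


# Four candidate reasoning paths: two fully correct, one nearly correct, one wrong.
rewards = [1.0, 1.0, 0.9, 0.0]
target = reward_to_target(rewards)
collapsed = [0.97, 0.01, 0.01, 0.01]  # reward maximization: one path dominates
print(kl(target, collapsed), kl(target, target))
```

The collapsed policy is penalized for ignoring the second correct path and the near-correct one, even though its favorite path has maximal reward; a policy matching the target covers all of them in proportion to their reward.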

As these results show, FlowRL has clear advantages on hard problems. This is also visible in a case study comparing FlowRL with GRPO on a mathematical problem: GRPO fails to solve it, while FlowRL explores diverse action paths and finally reaches the correct answer, 721.

This demonstrates that distribution matching enables more flexible reasoning than reward maximization.

Overall experimental results further validate the superiority of FlowRL:

• Accuracy Improvement: Trained on a 32B model, FlowRL achieved an accuracy of 48.39% on mathematical reasoning tasks, representing a 10-percentage-point increase over GRPO and a 5.1-percentage-point increase over PPO.

• Competition-Level Performance: After training on purely open-source data, FlowRL attained a rating of 1549 on the CodeForces platform, with performance on par with the o1-preview model.

• Doubled Diversity: The diversity score of solutions generated by FlowRL reached as high as 2.28, approximately twice that of PPO.

Matching Reward Distributions for LLM Reasoning (FlowRL)

Evolution Layer

Finally, let's zoom into the Evolution Layer. The key challenge at this layer is to build a self-evolving "Specializable Generalist". How do we achieve continuous learning across self-evolution, large-scale tasks, and even physical environments? To address this challenge, we have developed a comprehensive evolutionary mechanism from three critical dimensions: Signal, Scale, and Ground.

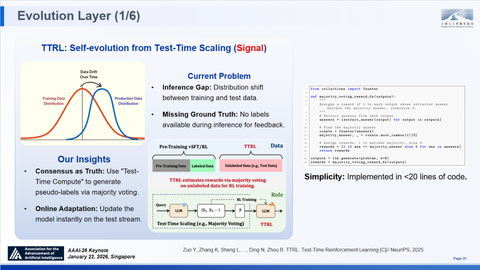

TTRL: Self-evolution from Test-Time Scaling (Signal)

During inference, the core problem is the distribution shift between training and test data. Once deprived of guidance from ground-truth labels, traditional models stop learning. True "experts", however, much like humans, can keep learning and adapting in any unforeseen circumstance.

To address this pain point, we propose the Test-Time Reinforcement Learning (TTRL) framework⑩, whose core insight is founded on a simple hypothesis: Consensus implies correctness.
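A minimal sketch of turning consensus into a reward signal, assuming that several answers are sampled per unlabeled test question, that their final answers have already been extracted as strings, and that majority voting serves as the consensus mechanism: the most frequent answer becomes a pseudo-label, and each sample is rewarded for agreeing with it.

```python
from collections import Counter
from typing import List, Sequence, Tuple


def majority_vote_rewards(answers: Sequence[str]) -> Tuple[str, List[float]]:
    """Estimate a pseudo-label by majority vote and reward agreement with it.

    Ties go to the answer encountered first, because Counter.most_common
    orders elements with equal counts by first appearance.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    pseudo_label, _ = Counter(answers).most_common(1)[0]
    rewards = [1.0 if a == pseudo_label else 0.0 for a in answers]
    return pseudo_label, rewards


# Five sampled final answers for one test question, no ground truth available.
label, rewards = majority_vote_rewards(["721", "721", "720", "721", "719"])
print(label, rewards)
```

These rule-based rewards can then feed a standard policy-gradient update, so the model learns on the test distribution itself without any labels.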

Test-Time Reinforcement Learning (TTRL)

Empirical results validate the remarkable potential of TTRL. On AIME 2024, a Qwen-2.5-Math-7B model achieves a relative accuracy gain of 159% with TTRL. Crucially, we observe Self-Transcendence: the TTRL-updated model not only outperforms its own Best-of-N baseline but even approaches the upper bound of training directly on test data with ground-truth labels (the Oracle baseline).

Back to SAGE: this mechanism realizes the Self-Evolution core, showing that intelligence can indeed spiral upwards autonomously.

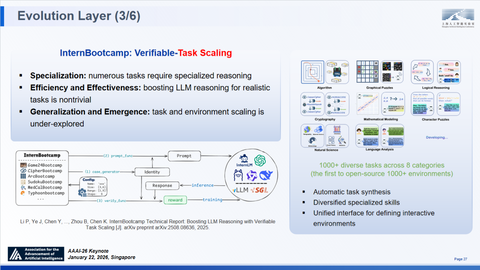

InternBootcamp: Verifiable Task Scaling (Scale)

Having addressed the signal question of how to learn, we must now answer the scale question of where to learn. A specializable generalist needs to specialize over a large number of tasks, and boosting reasoning on all of these realistic tasks simultaneously is non-trivial. We also aim to explore deeper questions: does a scaling law exist for the number of tasks? What happens when we scale up the number and diversity of tasks?

We therefore propose InternBootcamp⑪, a platform for verifiable task specialization. It is the first to provide over 1,000 diverse environments across 8 categories, and RL training in these environments significantly boosts model performance. InternBootcamp can also automatically generate tasks and their corresponding verification functions, which allows tasks and environments to scale rapidly and lets users easily turn specialized tasks (e.g., circuit design) into verifiable environments where correctness is confirmed through simulation.
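The generate-then-verify pattern behind a verifiable environment can be sketched as a small class that pairs a task generator with a verification function. Everything here is hypothetical and chosen only for illustration: the `ListSumEnv` name, the toy summation task, and the `Answer:` output convention are not InternBootcamp's actual interface.

```python
import random
import re
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    answer: int  # ground truth, seen only by the verifier


class ListSumEnv:
    """Hypothetical verifiable environment: sum a random list of integers."""

    def generate(self, seed: int) -> Task:
        rng = random.Random(seed)  # seeded, so every task is reproducible
        nums = [rng.randint(-50, 50) for _ in range(rng.randint(3, 8))]
        prompt = f"Compute the sum of {nums}. End with 'Answer: <integer>'."
        return Task(prompt=prompt, answer=sum(nums))

    def verify(self, task: Task, response: str) -> float:
        """Binary reward: 1.0 if the last 'Answer:' integer is correct, else 0.0."""
        matches = re.findall(r"Answer:\s*(-?\d+)", response)
        if not matches:
            return 0.0
        return 1.0 if int(matches[-1]) == task.answer else 0.0


env = ListSumEnv()
task = env.generate(seed=0)
print(task.prompt)
print(env.verify(task, f"Adding term by term... Answer: {task.answer}"))
```

For a specialized task such as circuit design, `verify` would call a simulator instead of doing an exact-match check, while the RL loop consuming the binary reward stays unchanged.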

InternBootcamp covers 1,000 diverse tasks across 8 categories

Experiments based on InternBootcamp have revealed two important phenomena.

Emergent Capabilities: InternBootcamp raised the performance of Qwen2.5-32B on BootcampEVAL, averaged across an evaluation set of 118 tasks, from 24.4 to 59.5. More crucially, certain logical tasks that were unsolvable with single-task training became solvable after mixed training on over 500 tasks, suggesting that inter-task correlations enhance the model's comprehension.

Task-Scaling Law: A continuous upward trend in performance was observed when scaling the number of task types from 8 to 512, confirming the existence of a scaling law associated with task growth.

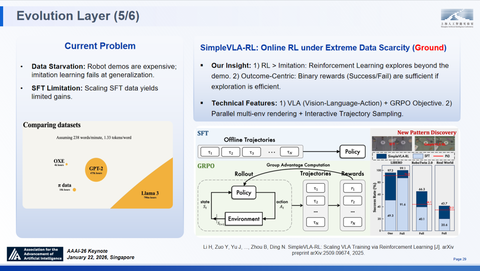

SimpleVLA-RL: Online RL under Extreme Data Scarcity (Ground)

True evolution must happen in the physical world. The current bottleneck of Embodied AI is data starvation: robot demonstrations are expensive, and simply scaling Supervised Fine-Tuning (SFT) data yields diminishing returns. Our insight is twofold. First, RL can outperform imitation because it explores beyond the demonstrations. Second, outcome-centric binary rewards (simply Success or Fail) are sufficient learning signals if exploration is efficient.

Building on this, we implement the SimpleVLA-RL⑫ framework, using a VLA (Vision-Language-Action) model with a GRPO objective, supported by parallel multi-environment rendering for interactive trajectory sampling.
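With binary Success/Fail rewards, the GRPO objective needs no learned critic: each rollout's advantage is its reward relative to the other rollouts sampled for the same task. The sketch shows only that advantage computation, assuming a group of rollouts per task; the clipped policy-gradient update and the VLA model itself are omitted, and some GRPO variants drop the standard-deviation term.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantage: normalize each rollout's reward within its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population standard deviation of the group
    return [(r - mu) / (sigma + eps) for r in rewards]


# Eight rollouts of the same manipulation task, scored 1 (success) or 0 (fail).
outcomes = [1, 0, 0, 1, 0, 0, 0, 1]
print(group_relative_advantages(outcomes))
```

A group in which every rollout fails (or every one succeeds) yields all-zero advantages and hence no gradient, which is why efficient exploration, producing at least some successes per group, is what makes such sparse binary rewards sufficient.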

SimpleVLA-RL: Online RL under Extreme Data Scarcity

The results challenge conventional wisdom on data efficiency. We found that 1-trajectory SFT combined with RL achieves a 96.9% success rate, outperforming full-trajectory SFT. We also see a 21% gain in Sim-to-Real transfer success rates on tasks like Stacking Bowls. Most excitingly, we observe Emergent Behavior: the robot discovers novel pushing strategies through RL that were never demonstrated. Unlike standard SFT, there is no catastrophic forgetting; the model maintains its general capabilities. Building on this, we recently implemented real-world RL on long-horizon dexterous tasks, witnessing a 300% relative improvement over SFT, along with surprising auto-recovery capabilities.

Thanks to the SimpleVLA-RL framework, we achieve results comparable to Physical Intelligence's Pi 0.6* with very little data and computation. This completes the SAGE architecture: it connects the "Brain" of reasoning to the "Body" of action, enabling true Embodied Evolution.

After nearly two years of solid exploration, SAGE has moved beyond the conceptual phase and completed full-stack validation. At the foundation layer, Memory Decoder achieves the structural decoupling of memory and computation; at the fusion layer, PRIME and FlowRL address supervision scarcity and single-path reasoning; at the evolution layer, TTRL, InternBootcamp and SimpleVLA-RL form a closed loop from test-time reinforcement to embodied evolution.

From AI4S (1.0) to AI4S (2.0) (= AGI4S)

AI4S has been in the headlines for a while. Existing successes include protein folding, weather forecasting, generic models, and materials generation, with AlphaFold as the poster child of AI4S. While these works show significant progress in specific domains, a recent Nature paper points out that AI narrows the boundaries of novel knowledge and thus harms innovation. This is consistent with our core view: deep learning excels at well-defined tasks with abundant data, which limits the diversity and novelty of research.

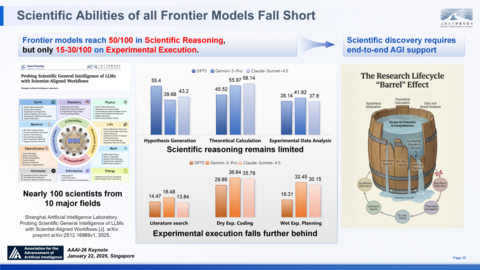

Other evidence also suggests that today's AI4S is insufficient. In evaluations designed by 100 scientists across the 10 major scientific fields listed here, frontier models reach scores of 50 on general scientific reasoning, but their performance drops to just 15-30 on specialized reasoning tasks related to experimental execution, ranging from specific literature search to dry-lab coding and wet-lab experiment planning⑬.

As the "Barrel Effect" illustrates, the entire SD lifecycle is only as strong as its weakest link. The gap we have today hence highlights the urgent need to integrate general and specialized reasoning capabilities to drive true SD.

Scientific Abilities of All Frontier Models Fall Short

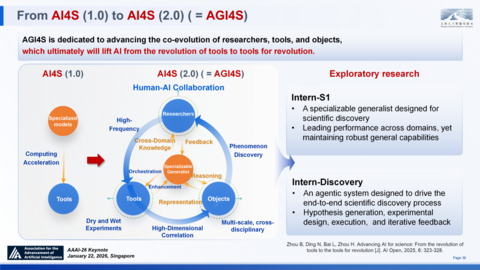

We need to move from today's AI4S, which we call 1.0, to its 2.0 version, AGI4S, which is dedicated to driving the co-evolution of researchers, research tools, and research objects. The complex, iterative interplay of these three defines the essence of the entire lifecycle of true frontier research. We believe that AGI can systematically improve this dynamic system as a whole, which will ultimately lift AI from a revolution of tools to tools for revolution in SD⑭.


Intern-S1: A Specializable Generalist for Science

Achieving that will of course require much more exciting research in AI; here I will report two steps we have accomplished with our teams. The first is Intern-S1⑮, a specializable generalist designed for a variety of scientific tasks. It integrates several additional innovations across SAGE's three layers.

Let's start with the Foundation Layer:

• It features a dynamic tokenizer and specialized encoders that process more than 10 scientific modalities, e.g., time series, DNA, molecular structures, proteins, and sensing data. The bottom of the slide shows an example of how the dynamic tokenizer works.

• The dynamic tokenizer achieves compression ratios up to 1.7x those of general models such as GPT-OSS or DeepSeek-R1 on scientific data (SMILES format).

• Trained on 2.5T scientific tokens across domains, generated by optimized PDF parsing and scientific website mining pipelines.

On the Fusion Layer:

• End-to-end complex scientific tasks demand comprehensive skills, such as calculation, reasoning, idea generation and experiment design. From RL training perspective, these skills require different types of reward.

• We therefore integrate multiple RL algorithms and techniques, such as the entropy mechanism presented earlier, into a unified framework, and jointly optimize the infrastructure and training recipes, which keeps RL training stable for weeks.

• This framework, MoR, balances the convergence speed of RL training across different tasks, mitigating overfitting to specific tasks and enhancing the model's generalization across difficulty levels and domains, which makes Intern-S1 more capable of solving diverse reasoning tasks in scientific domains.
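The idea that different skills need different reward types under one framework can be sketched as a routing table from task type to reward function. This is a hypothetical illustration only: the task types, the exact-match check, and the toy overlap score standing in for a learned reward model are assumptions, not MoR's actual components, and balancing convergence speed across tasks is not shown.

```python
from typing import Callable, Dict

RewardFn = Callable[[str, str], float]


def exact_match(response: str, reference: str) -> float:
    """Rule-based reward for tasks with a checkable final answer."""
    return 1.0 if response.strip() == reference.strip() else 0.0


def token_overlap(response: str, reference: str) -> float:
    """Toy stand-in for a learned reward model on open-ended tasks (Jaccard overlap)."""
    a, b = set(response.lower().split()), set(reference.lower().split())
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical routing table from task type to reward function.
REWARDS: Dict[str, RewardFn] = {
    "calculation": exact_match,
    "idea_generation": token_overlap,
}


def mixed_reward(task_type: str, response: str, reference: str) -> float:
    """Dispatch to the reward function registered for this task type."""
    try:
        fn = REWARDS[task_type]
    except KeyError:
        raise ValueError(f"no reward function registered for {task_type!r}") from None
    return fn(response, reference)


print(mixed_reward("calculation", " 42 ", "42"))
print(mixed_reward("idea_generation", "doped perovskite film", "perovskite film coating"))
```

Keeping the routing explicit lets verifiable and open-ended tasks share one training loop while each receives the kind of feedback it can actually support.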

On the Evolution Layer:

• We propose InternBootcamp, a framework for interactive learning in specialized scientific environments that transforms specialized tasks into acquired expertise.

• Interaction with environments is essential for specialized scientific tasks. For example, retrosynthesis, an important task in chemistry and materials science, is typically a multi-round, trial-and-error process in which the model must interact with simulators and design tools to adjust its solution.

Intern-S1 demonstrates comparable performance with SOTA open-source models on general capabilities, while in scientific performance across 9 major fields including chemistry, biology, and materials science, it comprehensively outperforms top closed-source models such as GPT-5 and Grok-4.

Intern-Discovery

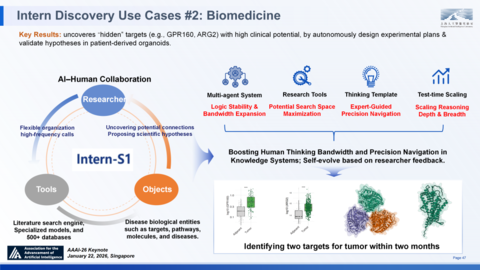

Another step we took toward AGI4S is Intern-Discovery, an agentic framework that dynamically connects Intern-S1 with abundant scientific data, 2,000+ professional tools, databases, knowledge graphs, and verification environments.

The core logic of Intern-Discovery lies in establishing a two-way loop between "agent generation" and "agent verification": the former proactively identifies phenomena, proposes hypotheses, and designs experiments; the latter verifies hypotheses through simulation and physical experiments, and transmits feedback back to revise cognition.

To support this complex process, the system has introduced two key pillars:

• Science Context Protocol (SCP)⑯: Addressing the shortcomings of the existing MCP protocol in integrating scientific resources, SCP defines domain-specific structures and coordination mechanisms, enabling standardized scheduling and full-lifecycle management of datasets, wet-lab equipment, and complex workflows.

• Hierarchical Memory Module: Through the collaboration of Strategic Procedural Memory (SPM), Task Episodic Memory (TEM), and Semantic Knowledge Memory (SKM), the system can distill high-level research patterns, record experimental details, and integrate long-term knowledge, thereby avoiding logical hallucinations over continuous iterations.
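One way to picture three cooperating memory tiers is a small data structure with one store per tier and a method that assembles them into context for the next iteration. This is a hypothetical sketch: the field names, the `build_context` method, and its ordering (strategy, then facts, then recent episodes) are illustrative assumptions, not Intern-Discovery's implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class HierarchicalMemory:
    """Hypothetical three-tier memory loosely mirroring SPM, TEM and SKM."""
    procedures: List[str] = field(default_factory=list)           # SPM-like: reusable research patterns
    episodes: List[Dict[str, str]] = field(default_factory=list)  # TEM-like: records of individual experiments
    knowledge: Dict[str, str] = field(default_factory=dict)       # SKM-like: long-term facts keyed by topic

    def record_episode(self, hypothesis: str, result: str) -> None:
        self.episodes.append({"hypothesis": hypothesis, "result": result})

    def build_context(self, topic: str, last_n: int = 3) -> str:
        """Assemble context: strategies first, then facts on the topic, then recent episodes."""
        lines = [f"Strategy: {p}" for p in self.procedures]
        if topic in self.knowledge:
            lines.append(f"Known ({topic}): {self.knowledge[topic]}")
        recent = self.episodes[-last_n:] if last_n > 0 else []
        for ep in recent:
            lines.append(f"Tried: {ep['hypothesis']} -> {ep['result']}")
        return "\n".join(lines)


mem = HierarchicalMemory(
    procedures=["check data quality before fitting"],
    knowledge={"precipitation": "strongly nonlinear dynamics"},
)
mem.record_episode("linear moisture term", "rejected: bias persists")
print(mem.build_context("precipitation"))
```

Feeding previously rejected hypotheses back into each iteration is one simple way such a design can keep an agent from re-proposing ideas it has already ruled out.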

Cases with Intern-Discovery

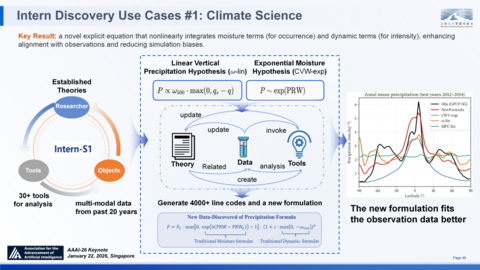

Intern-Discovery has demonstrated the potential of a "revolutionary tool" in the fields of climate science and biomedicine.

In climate science, where precipitation prediction involves extremely complex nonlinear interactions, Intern-Discovery autonomously called more than 30 types of tools, analyzed 20 years of multimodal data, and wrote over 4,000 lines of specialized code. It identified a correlation between water vapor and dynamic terms that human experts had overlooked and derived a concise new explicit nonlinear equation. Beyond its elegant form, the equation significantly improves simulation accuracy and corrects long-standing systematic biases, demonstrating the agent's creativity at the level of theory construction ⑰.

Intern-Discovery Use Case #1: Climate Science

In biomedicine, the virtual disease biologist "OriGene" integrates multi-source data, such as genetics, proteomics, and clinical literature, by imitating the reasoning templates of human scientists. Even under data-sparse conditions, it discovered and verified hidden targets with high clinical potential, demonstrating end-to-end capabilities from data to mechanisms and from hypotheses to verification.

Intern-Discovery Use Case #2: Biomedicine

Recap: Putting the Pieces Together

In summary, we are on the eve of achieving AGI. If AGI = Specialized Generalist, then a general model capable of deep specialization (a Specializable Generalist) is a feasible path to AGI, and the three-layer SAGE technical framework is the core architecture for building such a model.

The next frontier is scientific discovery: it is not only the ultimate testing ground for reasoning intelligence, but also the stage on which the "integration of generality and specialization" is validated. Large-scale reasoning will empower scientific discovery, and scientific discovery will in turn feed back into the evolution of reasoning capabilities.

Intern-S1 and Intern-Discovery are the first practical steps in this direction, but both are still early prototypes. If we compare the SAGE architecture to a map of a new world, we have achieved promising preliminary validation and established many vanguard outposts, but vast "blank areas" remain on this map.

The architecture is ready, but the canvas is still largely blank.

If these preliminary results intrigue you, I invite you to dive into our papers and code. They are all open-source. But more importantly, I invite you to join us in filling in these blanks. Let's build the rest of SAGE together.

Thank you!

References

① Vaswani A, et al. Attention is all you need [C]// Advances in Neural Information Processing Systems, 2017, 30.

② Zhang K, Qi B, Zhou B. Towards building specialized generalist AI with System 1 and System 2 fusion [J]. arXiv preprint arXiv:2407.08642, 2024.

③ Qi B, Zhang K, Tian K, ..., Zhou B. Large language models as biomedical hypothesis generators: a comprehensive evaluation [C]// COLM, 2024.

④ Zhou B. Building AGI through Specialized Generalist AI: pathways and key issues [J]. Communications of CCF, 2025, 21(1): 54-62.

⑤ Cao J, Wang J, Wei R, ..., Zhou B, Lin Z. Memory Decoder: A Pretrained, Plug-and-Play Memory for Large Language Models [J]. arXiv preprint arXiv:2508.09874, 2025.

⑥ Zhang K, Zuo Y, He B, ..., Zhou B. A survey of reinforcement learning for large reasoning models [J]. arXiv preprint arXiv:2509.08827, 2025.

⑦ Cui G, Yuan L, Wang Z, ..., Zhou B, Ding N. Process Reinforcement through Implicit Rewards [J]. arXiv preprint arXiv:2502.01456, 2025.

⑧ Cui G, Zhang Y, Chen J, ..., Zhou B, Ding N. The Entropy Mechanism of Reinforcement Learning for Reasoning Language Models [J]. arXiv preprint arXiv:2505.22617, 2025.

⑨ Zhu X, Cheng D, Zhang D, ..., Zhou B, Mei H, Lin Z. FlowRL: Matching reward distributions for LLM reasoning [J]. arXiv preprint arXiv:2509.15207, 2025.

⑩ Zuo Y, Zhang K, Sheng L, ..., Ding N, Zhou B. TTRL: Test-Time Reinforcement Learning [C]// NeurIPS, 2025.

⑪ Li P, Ye J, Chen Y, ..., Zhou B, Chen K. InternBootcamp Technical Report: Boosting LLM Reasoning with Verifiable Task Scaling [J]. arXiv preprint arXiv:2508.08636, 2025.

⑫ Li H, Zuo Y, Yu J, ..., Zhou B, Ding N. SimpleVLA-RL: Scaling VLA Training via Reinforcement Learning [J]. arXiv preprint arXiv:2509.09674, 2025.

⑬ Shanghai Artificial Intelligence Laboratory. Probing Scientific General Intelligence of LLMs with Scientist-Aligned Workflows [J]. arXiv preprint arXiv:2512.16969v1, 2025.

⑭ Zhou B, Ding N, Bai L, Zhou H. Advancing AI for science: From the revolution of tools to the tools for revolution [J]. AI Open, 2025, 6: 323-328.

⑮ Shanghai AI Laboratory. Intern-S1: A Scientific Multimodal Foundation Model [J]. arXiv preprint arXiv:2508.15763, 2025.

⑯ Jiang Y, Lou W, Wang L, ..., Zhou B. SCP: Accelerating Discovery with a Global Web of Autonomous Scientific Agents [J]. arXiv preprint arXiv:2512.24189, 2025.

⑰ Guo Z, Wang J, Ling F, ..., Zhou B, Bai L. A Self-Evolving AI Agent System for Climate Science [J]. arXiv preprint arXiv:2507.17311v3, 2025.